How Convolution Works

A guided tour through convolution in two dimensions for convolutional neural networks and image processing

End-to-End Machine Learning Course 322: https://e2eml.school/322

Tutorial on convolution in one dimension: https://e2eml.school/convolution_one_d.html

Code for the animations: https://gitlab.com/brohrer/convolution-2d-animation

Robot Learning with a Biologically-Inspired Brain (BECCA)

Brandon Rohrer

BECCA talk at AGI 2011

Brandon Rohrer

Robot Learning with a Biologically-Inspired Brain (BECCA), The Sequel

Brandon Rohrer

BECCA listens to The Hobbit

Brandon Rohrer

Learning the building blocks of speech: BECCA extracts a hierarchy of audio features

Brandon Rohrer

BECCA listens for sound effects in The Hobbit

Brandon Rohrer

BECCA finds movie trailers while watching the Big Bang Theory

Brandon Rohrer

Listening for unexpected sounds: BECCA detects anomalies in audio data

Brandon Rohrer

Learning the building blocks of vision: BECCA extracts a spatio-temporal hierarchy of features

Brandon Rohrer

Watching for the unexpected: BECCA detects anomalies in video data

Brandon Rohrer

BECCA finds a stationary target

Brandon Rohrer

BECCA finds a stationary target at 3X speed

Brandon Rohrer

BECCA watches the X-men and Bruce Lee

Brandon Rohrer

BECCA plays Quidditch

Brandon Rohrer

BECCA chases a ball

Brandon Rohrer

BECCA chases a ball, part 2

Brandon Rohrer

Becca chases a ball, part 3

Brandon Rohrer

BECCA creates features from MNIST

Brandon Rohrer

How reinforcement learning works in Becca 7

Brandon Rohrer

Deep Learning Demystified

Brandon Rohrer

How Data Science Works

Brandon Rohrer

How Convolutional Neural Networks work

Brandon Rohrer

How Bayes Theorem works

Brandon Rohrer

How Deep Neural Networks Work

Brandon Rohrer

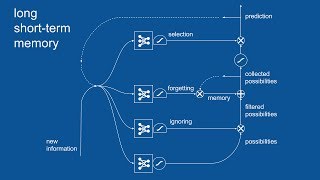

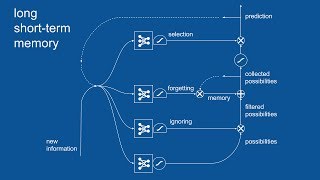

Recurrent Neural Networks (RNN) and Long Short-Term Memory (LSTM)

Brandon Rohrer

How Support Vector Machines work / How to open a black box

Brandon Rohrer

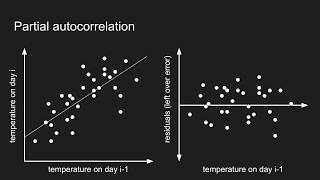

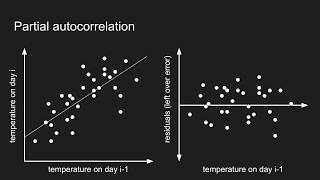

How autocorrelation works

Brandon Rohrer

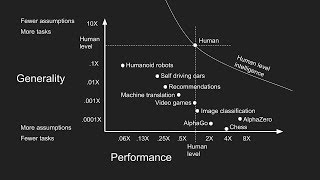

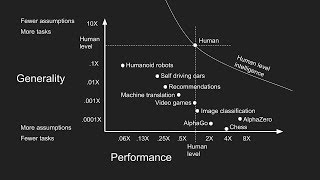

Getting closer to human intelligence through robotics

Brandon Rohrer

A minimalist's guide to slicing and indexing pandas DataFrames

Brandon Rohrer

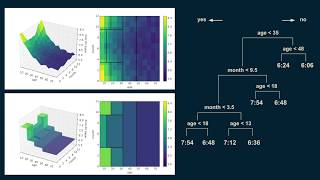

How decision trees work

Brandon Rohrer

Data scientist archetypes

Brandon Rohrer

How to use python's datetime package

Brandon Rohrer

How optimization for machine learning works, part 1

Brandon Rohrer

How optimization for machine learning works, part 2

Brandon Rohrer

How optimization for machine learning works, part 3

Brandon Rohrer

How optimization for machine learning works, part 4

Brandon Rohrer

How convolutional neural networks work, in depth

Brandon Rohrer

How to pick a machine learning model 4: Splitting the data

Brandon Rohrer

How to pick a machine learning model 3: Choosing a loss function

Brandon Rohrer

How to pick a machine learning model 2: Separating signal from noise

Brandon Rohrer

How to pick a machine learning model 1: Choosing between models

Brandon Rohrer

How to pick a machine learning model 5: Navigating assumptions

Brandon Rohrer

What do neural networks learn?

Brandon Rohrer

Interview with iRobot's Director of Data Science Angela Bassa

Brandon Rohrer

How Backpropagation Works

Brandon Rohrer

Evolutionary Powell's method: A discrete optimizer for hyperparameter optimization

Brandon Rohrer

1D convolution for neural networks, part 1: Sliding dot product

Brandon Rohrer

1D convolution for neural networks, part 2: Convolution copies the kernel

Brandon Rohrer

1D convolution for neural networks, part 3: Sliding dot product equations longhand

Brandon Rohrer

1D convolution for neural networks, part 4: Convolution equation

Brandon Rohrer

1D convolution for neural networks, part 5: Backpropagation

Brandon Rohrer

1D convolution for neural networks, part 6: Input gradient

Brandon Rohrer

1D convolution for neural networks, part 7: Weight gradient

Brandon Rohrer

1D convolution for neural networks, part 8: Padding

Brandon Rohrer

1D convolution for neural networks, part 9: Stride

Brandon Rohrer

The Four Grand Challenges of Robots in the Home

Brandon Rohrer

How Convolution Works

Brandon Rohrer

The Softmax neural network layer

Brandon Rohrer

Batch normalization

Brandon Rohrer

Getting ready to learn Python, Mac edition #1: Files and directories

Brandon Rohrer

⚡

AI Lesson Summary

This video explains how two-dimensional convolution works in convolutional neural networks and image processing. It walks through reversing the kernel, sliding it over the image, and calculating the feature map. By the end, you should understand how to convolve an image with a kernel and extract features from it.
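One common way to write the operation down, offered as a sketch rather than the video's own notation: assume a zero-indexed image $x$ of size $H \times W$ and a kernel $w$ of size $K_h \times K_w$ (these symbols are assumptions for illustration). Without padding, each feature-map value is

$$
y[i, j] = \sum_{m=0}^{K_h - 1} \sum_{n=0}^{K_w - 1} x[i + m,\, j + n] \; w[K_h - 1 - m,\, K_w - 1 - n],
\qquad 0 \le i \le H - K_h,\quad 0 \le j \le W - K_w .
$$

The reversed indices on $w$ express the kernel flip, and the index ranges give a feature map of size $(H - K_h + 1) \times (W - K_w + 1)$.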

Key Takeaways

- Reverse the kernel by flipping it top to bottom and left to right.

- Slide the reversed kernel over the image one position at a time; at each position, multiply each kernel value by the pixel beneath it and add up the products.

- Repeat for every row and column position to produce a feature map, which without padding is slightly smaller than the original image.

- Use zero padding if you want the result to be the same size as the original image.

- Use the same operation to implement a convolutional neural network and to calculate gradients for backpropagation.
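The first four steps above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the code from the video or its animation repository: the function names `flip_kernel`, `zero_pad`, and `convolve2d`, the `same_size` flag, and the sample image and kernel are all hypothetical, and the padding path assumes a kernel with odd dimensions.

```python
def flip_kernel(kernel):
    """Reverse the kernel top to bottom and left to right."""
    return [row[::-1] for row in kernel[::-1]]


def zero_pad(image, pad_rows, pad_cols):
    """Surround the image with a border of zeros."""
    width = len(image[0]) + 2 * pad_cols
    padded = [[0] * width for _ in range(pad_rows)]
    for row in image:
        padded.append([0] * pad_cols + list(row) + [0] * pad_cols)
    padded.extend([0] * width for _ in range(pad_rows))
    return padded


def convolve2d(image, kernel, same_size=False):
    """Convolve a 2D image (a list of rows) with a 2D kernel.

    With same_size=True the image is zero-padded first so the result has
    the same shape as the input (assumes odd kernel dimensions).
    """
    k_rows, k_cols = len(kernel), len(kernel[0])
    if same_size:
        image = zero_pad(image, k_rows // 2, k_cols // 2)
    flipped = flip_kernel(kernel)
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    result = []
    for i in range(out_rows):          # every row position
        out_row = []
        for j in range(out_cols):      # every column position
            total = 0
            for m in range(k_rows):
                for n in range(k_cols):
                    # multiply each kernel value by the pixel beneath it
                    total += image[i + m][j + n] * flipped[m][n]
            out_row.append(total)
        result.append(out_row)
    return result


# Hypothetical 4x4 image with a vertical edge, and a 3x3 edge-style kernel.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
kernel = [
    [1, 0, -1],
    [1, 0, -1],
    [1, 0, -1],
]

feature_map = convolve2d(image, kernel)          # 2x2 result, no padding
same = convolve2d(image, kernel, same_size=True)  # 4x4 result, zero-padded
print(feature_map)  # [[3, 3], [3, 3]]
```

Without padding, the 4x4 image and 3x3 kernel give a 2x2 feature map; with `same_size=True` the result is 4x4 again, at the cost of border values that are influenced by the added zeros.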

💡 Convolution can extract features such as edges, lines, or shapes from an image, which makes it useful in applications including image classification, object detection, and image segmentation.
