Using Machine Learning to Predict NBA Games (Student Presentation, Group 22)

This is a STAT 451 class project presentation.

by Almann Brague

This presentation is shared with the students' permission.

Abstract:

I use machine learning algorithms to predict the outcome of NBA basketball games. I have enjoyed the game of basketball all my life, both as a participant and as a fan, and through this project I hope to find a machine learning algorithm that predicts wins and losses in NBA games. For this, I collected statistics from NBA games from the years 2012 to 2018 and assigned them to each individual team, which allows me to predict which team would win a given matchup. I trained the models on the years 2012-2017 and tested them on the most recent years, 2017-2018. The models were K-Nearest Neighbors (KNN), Decision Trees, Random Forests, and Gradient Boosting Classifiers. To find which model was best suited to making accurate predictions, I used nested cross-validation, which compares the four algorithms while also searching for their best hyperparameters. With the best hyperparameters applied to each model, I achieved prediction accuracies on the training set ranging from 57.04 percent to 65.76 percent. The best model by training set accuracy was Gradient Boosting. When evaluated on the test set with its selected hyperparameters, Gradient Boosting achieved a test accuracy of 65.89 percent.
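The nested cross-validation step described in the abstract can be sketched roughly as follows. This is a minimal illustration with scikit-learn, not the project's actual code: the data is a synthetic stand-in generated with `make_classification`, and the hyperparameter grids, fold counts, and random seeds are assumptions chosen only to keep the example small.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier

# Synthetic stand-in for the per-team game statistics (assumption:
# the real features and labels are not given in the abstract).
X, y = make_classification(n_samples=300, n_features=10, random_state=0)

# The four algorithm families named in the abstract, each paired with a
# small, hypothetical hyperparameter grid.
candidates = {
    "KNN": (KNeighborsClassifier(), {"n_neighbors": [5, 15]}),
    "Decision Tree": (
        DecisionTreeClassifier(random_state=0),
        {"max_depth": [3, 5]},
    ),
    "Random Forest": (
        RandomForestClassifier(n_estimators=50, random_state=0),
        {"max_depth": [3, None]},
    ),
    "Gradient Boosting": (
        GradientBoostingClassifier(n_estimators=50, random_state=0),
        {"learning_rate": [0.05, 0.1]},
    ),
}

# Inner loop: hyperparameter search. Outer loop: performance estimate
# for the whole "algorithm + tuning" procedure.
inner_cv = StratifiedKFold(n_splits=2, shuffle=True, random_state=1)
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)

nested_scores = {}
for name, (estimator, grid) in candidates.items():
    search = GridSearchCV(estimator, grid, scoring="accuracy", cv=inner_cv)
    # cross_val_score refits the grid search on each outer training fold,
    # so the outer test folds never influence hyperparameter selection.
    scores = cross_val_score(search, X, y, scoring="accuracy", cv=outer_cv)
    nested_scores[name] = scores.mean()
    print(f"{name}: {scores.mean():.4f} +/- {scores.std():.4f}")

best_name = max(nested_scores, key=nested_scores.get)
print("Selected algorithm:", best_name)
```

After an algorithm is selected this way, a common follow-up is to rerun the grid search for that algorithm on the full training set and evaluate the refit model once on the held-out test set, which matches the final step the abstract describes for Gradient Boosting.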

![Intro to Deep Learning -- L06.5 Cloud Computing [Stat453, SS20]](https://i.ytimg.com/vi/9eH1SAs8K3o/mqdefault.jpg)

Intro to Deep Learning -- L06.5 Cloud Computing [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L09 Regularization [Stat453, SS20]](https://i.ytimg.com/vi/KwaxQKiLkFY/mqdefault.jpg)

Intro to Deep Learning -- L09 Regularization [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L10 Input and Weight Normalization Part 1/2 [Stat453, SS20]](https://i.ytimg.com/vi/QQD9Y2FiotQ/mqdefault.jpg)

Intro to Deep Learning -- L10 Input and Weight Normalization Part 1/2 [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L10 Input and Weight Normalization Part 2/2 [Stat453, SS20]](https://i.ytimg.com/vi/H_hrdUUrjho/mqdefault.jpg)

Intro to Deep Learning -- L10 Input and Weight Normalization Part 2/2 [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L11 Common Optimization Algorithms [Stat453, SS20]](https://i.ytimg.com/vi/MyWwxEHC5zE/mqdefault.jpg)

Intro to Deep Learning -- L11 Common Optimization Algorithms [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L12 Intro to Convolutional Neural Networks (Part 1) [Stat453, SS20]](https://i.ytimg.com/vi/7ftuaShIzhc/mqdefault.jpg)

Intro to Deep Learning -- L12 Intro to Convolutional Neural Networks (Part 1) [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L13 Intro to Convolutional Neural Networks (Part 2) 1/2 [Stat453, SS20]](https://i.ytimg.com/vi/mZmyp0JjH6s/mqdefault.jpg)

Intro to Deep Learning -- L13 Intro to Convolutional Neural Networks (Part 2) 1/2 [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L13 Intro to Convolutional Neural Networks (Part 2) 2/2 [Stat453, SS20]](https://i.ytimg.com/vi/ji05GxulVuY/mqdefault.jpg)

Intro to Deep Learning -- L13 Intro to Convolutional Neural Networks (Part 2) 2/2 [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L14 Intro to Recurrent Neural Networks [Stat453, SS20]](https://i.ytimg.com/vi/tFWex9e-sg8/mqdefault.jpg)

Intro to Deep Learning -- L14 Intro to Recurrent Neural Networks [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L15 Autoencoders [Stat453, SS20]](https://i.ytimg.com/vi/iddlDHXDxc0/mqdefault.jpg)

Intro to Deep Learning -- L15 Autoencoders [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L16 Generative Adversarial Networks [Stat453, SS20]](https://i.ytimg.com/vi/aka29GqbsEM/mqdefault.jpg)

Intro to Deep Learning -- L16 Generative Adversarial Networks [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- Student Presentations, Day 1 [Stat453, SS20]](https://i.ytimg.com/vi/e_I0q3mmfw4/mqdefault.jpg)

Intro to Deep Learning -- Student Presentations, Day 1 [Stat453, SS20]

Sebastian Raschka

1.2 What is Machine Learning (L01: What is Machine Learning)

Sebastian Raschka

1.3 Categories of Machine Learning (L01: What is Machine Learning)

Sebastian Raschka

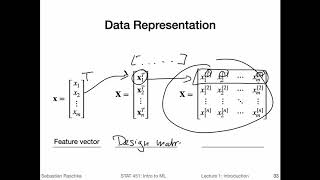

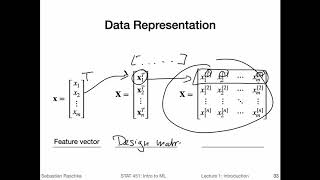

1.4 Notation (L01: What is Machine Learning)

Sebastian Raschka

1.1 Course overview (L01: What is Machine Learning)

Sebastian Raschka

1.5 ML application (L01: What is Machine Learning)

Sebastian Raschka

1.6 ML motivation (L01: What is Machine Learning)

Sebastian Raschka

2.1 Introduction to NN (L02: Nearest Neighbor Methods)

Sebastian Raschka

2.2 Nearest neighbor decision boundary (L02: Nearest Neighbor Methods)

Sebastian Raschka

2.3 K-nearest neighbors (L02: Nearest Neighbor Methods)

Sebastian Raschka

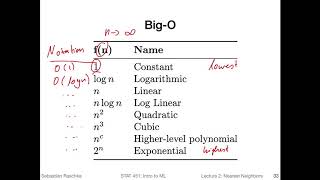

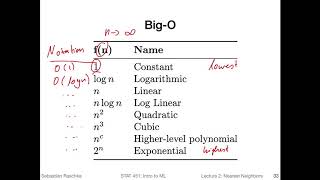

2.4 Big O of K-nearest neighbors (L02: Nearest Neighbor Methods)

Sebastian Raschka

2.5 Improving k-nearest neighbors (L02: Nearest Neighbor Methods)

Sebastian Raschka

2.6 K-nearest neighbors in Python (L02: Nearest Neighbor Methods)

Sebastian Raschka

3.1 (Optional) Python overview

Sebastian Raschka

3.2 (Optional) Python setup

Sebastian Raschka

3.3 (Optional) Running Python code

Sebastian Raschka

4.1 Intro to NumPy (L04: Scientific Computing in Python)

Sebastian Raschka

4.2 NumPy Array Construction and Indexing (L04: Scientific Computing in Python)

Sebastian Raschka

4.4 NumPy Broadcasting (L04: Scientific Computing in Python)

Sebastian Raschka

4.5 NumPy Advanced Indexing -- Memory Views and Copies (L04: Scientific Computing in Python)

Sebastian Raschka

4.3 NumPy Array Math and Universal Functions (L04: Scientific Computing in Python)

Sebastian Raschka

4.7 Reshaping NumPy Arrays (L04: Scientific Computing in Python)

Sebastian Raschka

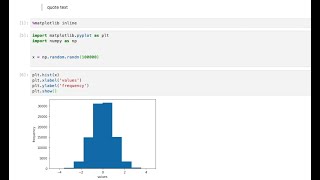

4.6 NumPy Random Number Generators (L04: Scientific Computing in Python)

Sebastian Raschka

4.8 NumPy Comparison Operators and Masks (L04: Scientific Computing in Python)

Sebastian Raschka

4.9 NumPy Linear Algebra Basics (L04: Scientific Computing in Python)

Sebastian Raschka

4.10 Matplotlib (L04: Scientific Computing in Python)

Sebastian Raschka

5.1 Reading a Dataset from a Tabular Text File (L05: Machine Learning with Scikit-Learn)

Sebastian Raschka

5.2 Basic data handling (L05: Machine Learning with Scikit-Learn)

Sebastian Raschka

5.3 Object Oriented Programming & Python Classes (L05: Machine Learning with Scikit-Learn)

Sebastian Raschka

5.4 Intro to Scikit-learn (L05: Machine Learning with Scikit-Learn)

Sebastian Raschka

5.5 Scikit-learn Transformer API (L05: Machine Learning with Scikit-Learn)

Sebastian Raschka

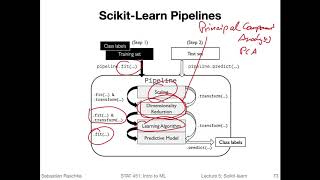

5.6 Scikit-learn Pipelines (L05: Machine Learning with Scikit-Learn)

Sebastian Raschka

6.1 Intro to Decision Trees (L06: Decision Trees)

Sebastian Raschka

6.2 Recursive algorithms & Big-O (L06: Decision Trees)

Sebastian Raschka

6.3 Types of decision trees (L06: Decision Trees)

Sebastian Raschka

6.5 Gini & Entropy versus misclassification error (L06: Decision Trees)

Sebastian Raschka

6.6 Improvements & dealing with overfitting (L06: Decision Trees)

Sebastian Raschka

6.7 Code Example Implementing Decision Trees in Scikit-Learn (L06: Decision Trees)

Sebastian Raschka

7.1 Intro to ensemble methods (L07: Ensemble Methods)

Sebastian Raschka

7.2 Majority Voting (L07: Ensemble Methods)

Sebastian Raschka

7.3 Bagging (L07: Ensemble Methods)

Sebastian Raschka

7.4 Boosting and AdaBoost (L07: Ensemble Methods)

Sebastian Raschka

7.5 Gradient Boosting (L07: Ensemble Methods)

Sebastian Raschka

7.6 Random Forests (L07: Ensemble Methods)

Sebastian Raschka

7.7 Stacking (L07: Ensemble Methods)

Sebastian Raschka

8.1 Intro to overfitting and underfitting (L08: Model Evaluation Part 1)

Sebastian Raschka

8.2 Intuition behind bias and variance (L08: Model Evaluation Part 1)

Sebastian Raschka

8.3 Bias-Variance Decomposition of the Squared Error (L08: Model Evaluation Part 1)

Sebastian Raschka

8.4 Bias and Variance vs Overfitting and Underfitting (L08: Model Evaluation Part 1)

Sebastian Raschka
