Machine Learning-Based Authorship Identification in Web Fictions (Student Presentation, Group 17)

This is a STAT 451 class project presentation

by Fangying Zhan, Weijia Cao, and Yuan Tian

This presentation is shared with the students' permission.

Abstract:

To tell the authors of online web fictions from analyzing the text data using snippets cut from those works, we use machine-learning algorithms to try and identify differ- ent authors’ writing styles. We collected data from Fan- Fiction.Net, the most popular online archive of fan fictions. Four authors’ chapters of fan fictions based off of Inception, Harry Potter, Avengers, and Naruto were cut into 851 snippets to constitute our dataset. All the text data were preprocessed using tokenization, lowercasing, and lemmatization. We compared two approaches of preprocessing the text data: LSM words + punctuations as features and stop word removal, two approaches of train-test splitting: a random 80%-20% split and a split based on themes, and two approaches for feature extraction: bag-of-words and tf-idf models. We employed multinomial naive Bayes classifier, which is empirically proven suitable and effective in its performance with tackling text data. We explored the performance of different approaches under 4 different scenarios to find the best setting for our model. Under Inception-non- Inception splitting, bag-of-words with multinomial naive Bayes classifier gave us the most reasonable and improved results to identify an author’s writing style.

Watch on YouTube ↗

(saves to browser)

Sign in to unlock AI tutor explanation · ⚡30

Playlist

Uploads from Sebastian Raschka · Sebastian Raschka · 0 of 60

← Previous

Next →

1

![Intro to Deep Learning -- L06.5 Cloud Computing [Stat453, SS20]](https://i.ytimg.com/vi/9eH1SAs8K3o/mqdefault.jpg) 2

2

![Intro to Deep Learning -- L09 Regularization [Stat453, SS20]](https://i.ytimg.com/vi/KwaxQKiLkFY/mqdefault.jpg) 3

3

![Intro to Deep Learning -- L10 Input and Weight Normalization Part 1/2 [Stat453, SS20]](https://i.ytimg.com/vi/QQD9Y2FiotQ/mqdefault.jpg) 4

4

![Intro to Deep Learning -- L10 Input and Weight Normalization Part 2/2 [Stat453, SS20]](https://i.ytimg.com/vi/H_hrdUUrjho/mqdefault.jpg) 5

5

![Intro to Deep Learning -- L11 Common Optimization Algorithms [Stat453, SS20]](https://i.ytimg.com/vi/MyWwxEHC5zE/mqdefault.jpg) 6

6

![Intro to Deep Learning -- L12 Intro to Convolutional Neural Networks (Part 1) [Stat453, SS20]](https://i.ytimg.com/vi/7ftuaShIzhc/mqdefault.jpg) 7

7

![Intro to Deep Learning -- L13 Intro to Convolutional Neural Networks (Part 2) 1/2 [Stat453, SS20]](https://i.ytimg.com/vi/mZmyp0JjH6s/mqdefault.jpg) 8

8

![Intro to Deep Learning -- L13 Intro to Convolutional Neural Networks (Part 2) 2/2 [Stat453, SS20]](https://i.ytimg.com/vi/ji05GxulVuY/mqdefault.jpg) 9

9

![Intro to Deep Learning -- L14 Intro to Recurrent Neural Networks [Stat453, SS20]](https://i.ytimg.com/vi/tFWex9e-sg8/mqdefault.jpg) 10

10

![Intro to Deep Learning -- L15 Autoencoders [Stat453, SS20]](https://i.ytimg.com/vi/iddlDHXDxc0/mqdefault.jpg) 11

11

![Intro to Deep Learning -- L16 Generative Adversarial Networks [Stat453, SS20]](https://i.ytimg.com/vi/aka29GqbsEM/mqdefault.jpg) 12

12

![Intro to Deep Learning -- Student Presentations, Day 1 [Stat453, SS20]](https://i.ytimg.com/vi/e_I0q3mmfw4/mqdefault.jpg) 13

13

14

14

15

15

16

16

17

17

18

18

19

19

20

20

21

21

22

22

23

23

24

24

25

25

26

26

27

27

28

28

29

29

30

30

31

31

32

32

33

33

34

34

35

35

36

36

37

37

38

38

39

39

40

40

41

41

42

42

43

43

44

44

45

45

46

46

47

47

48

48

49

49

50

50

51

51

52

52

53

53

54

54

55

55

56

56

57

57

58

58

59

59

60

60

![Intro to Deep Learning -- L06.5 Cloud Computing [Stat453, SS20]](https://i.ytimg.com/vi/9eH1SAs8K3o/mqdefault.jpg)

Intro to Deep Learning -- L06.5 Cloud Computing [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L09 Regularization [Stat453, SS20]](https://i.ytimg.com/vi/KwaxQKiLkFY/mqdefault.jpg)

Intro to Deep Learning -- L09 Regularization [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L10 Input and Weight Normalization Part 1/2 [Stat453, SS20]](https://i.ytimg.com/vi/QQD9Y2FiotQ/mqdefault.jpg)

Intro to Deep Learning -- L10 Input and Weight Normalization Part 1/2 [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L10 Input and Weight Normalization Part 2/2 [Stat453, SS20]](https://i.ytimg.com/vi/H_hrdUUrjho/mqdefault.jpg)

Intro to Deep Learning -- L10 Input and Weight Normalization Part 2/2 [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L11 Common Optimization Algorithms [Stat453, SS20]](https://i.ytimg.com/vi/MyWwxEHC5zE/mqdefault.jpg)

Intro to Deep Learning -- L11 Common Optimization Algorithms [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L12 Intro to Convolutional Neural Networks (Part 1) [Stat453, SS20]](https://i.ytimg.com/vi/7ftuaShIzhc/mqdefault.jpg)

Intro to Deep Learning -- L12 Intro to Convolutional Neural Networks (Part 1) [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L13 Intro to Convolutional Neural Networks (Part 2) 1/2 [Stat453, SS20]](https://i.ytimg.com/vi/mZmyp0JjH6s/mqdefault.jpg)

Intro to Deep Learning -- L13 Intro to Convolutional Neural Networks (Part 2) 1/2 [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L13 Intro to Convolutional Neural Networks (Part 2) 2/2 [Stat453, SS20]](https://i.ytimg.com/vi/ji05GxulVuY/mqdefault.jpg)

Intro to Deep Learning -- L13 Intro to Convolutional Neural Networks (Part 2) 2/2 [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L14 Intro to Recurrent Neural Networks [Stat453, SS20]](https://i.ytimg.com/vi/tFWex9e-sg8/mqdefault.jpg)

Intro to Deep Learning -- L14 Intro to Recurrent Neural Networks [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L15 Autoencoders [Stat453, SS20]](https://i.ytimg.com/vi/iddlDHXDxc0/mqdefault.jpg)

Intro to Deep Learning -- L15 Autoencoders [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- L16 Generative Adversarial Networks [Stat453, SS20]](https://i.ytimg.com/vi/aka29GqbsEM/mqdefault.jpg)

Intro to Deep Learning -- L16 Generative Adversarial Networks [Stat453, SS20]

Sebastian Raschka

![Intro to Deep Learning -- Student Presentations, Day 1 [Stat453, SS20]](https://i.ytimg.com/vi/e_I0q3mmfw4/mqdefault.jpg)

Intro to Deep Learning -- Student Presentations, Day 1 [Stat453, SS20]

Sebastian Raschka

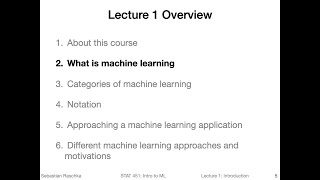

1.2 What is Machine Learning (L01: What is Machine Learning)

Sebastian Raschka

1.3 Categories of Machine Learning (L01: What is Machine Learning)

Sebastian Raschka

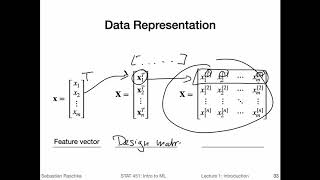

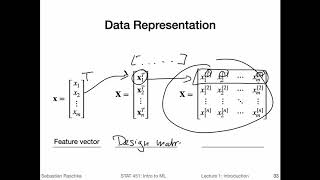

1.4 Notation (L01: What is Machine Learning)

Sebastian Raschka

1.1 Course overview (L01: What is Machine Learning)

Sebastian Raschka

1.5 ML application (L01: What is Machine Learning)

Sebastian Raschka

1.6 ML motivation (L01: What is Machine Learning)

Sebastian Raschka

2.1 Introduction to NN (L02: Nearest Neighbor Methods)

Sebastian Raschka

2.2 Nearest neighbor decision boundary (L02: Nearest Neighbor Methods)

Sebastian Raschka

2.3 K-nearest neighbors (L02: Nearest Neighbor Methods)

Sebastian Raschka

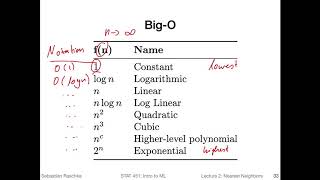

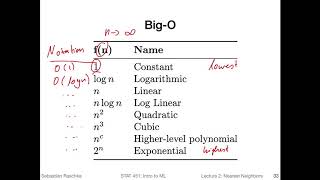

2.4 Big O of K-nearest neighbors (L02: Nearest Neighbor Methods)

Sebastian Raschka

2.5 Improving k-nearest neighbors (L02: Nearest Neighbor Methods)

Sebastian Raschka

2.6 K-nearest neighbors in Python (L02: Nearest Neighbor Methods)

Sebastian Raschka

3.1 (Optional) Python overview

Sebastian Raschka

3.2 (Optional) Python setup

Sebastian Raschka

3.3 (Optional) Running Python code

Sebastian Raschka

4.1 Intro to NumPy (L04: Scientific Computing in Python)

Sebastian Raschka

4.2 NumPy Array Construction and Indexing (L04: Scientific Computing in Python)

Sebastian Raschka

4.4 NumPy Broadcasting (L04: Scientific Computing in Python)

Sebastian Raschka

4.5 NumPy Advanced Indexing -- Memory Views and Copies (L04: Scientific Computing in Python)

Sebastian Raschka

4.3 NumPy Array Math and Universal Functions (L04: Scientific Computing in Python)

Sebastian Raschka

4.7 Reshaping NumPy Arrays (L04: Scientific Computing in Python)

Sebastian Raschka

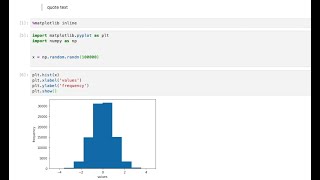

4.6 NumPy Random Number Generators (L04: Scientific Computing in Python)

Sebastian Raschka

4.8 NumPy Comparison Operators and Masks (L04: Scientific Computing in Python)

Sebastian Raschka

4.9 NumPy Linear Algebra Basics (L04: Scientific Computing in Python)

Sebastian Raschka

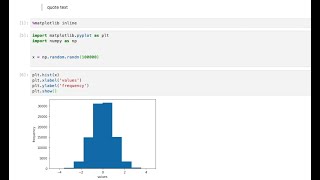

4.10 Matplotlib (L04: Scientific Computing in Python)

Sebastian Raschka

5.1 Reading a Dataset from a Tabular Text File (L05: Machine Learning with Scikit-Learn)

Sebastian Raschka

5.2 Basic data handling (L05: Machine Learning with Scikit-Learn)

Sebastian Raschka

5.3 Object Oriented Programming & Python Classes (L05: Machine Learning with Scikit-Learn)

Sebastian Raschka

5.4 Intro to Scikit-learn (L05: Machine Learning with Scikit-Learn)

Sebastian Raschka

5.5 Scikit-learn Transformer API (L05: Machine Learning with Scikit-Learn)

Sebastian Raschka

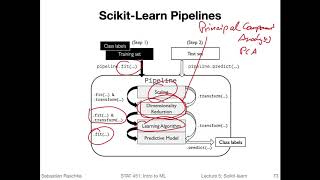

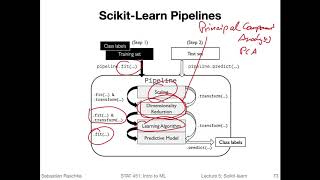

5.6 Scikit-learn Pipelines (L05: Machine Learning with Scikit-Learn)

Sebastian Raschka

6.1 Intro to Decision Trees (L06: Decision Trees)

Sebastian Raschka

6.2 Recursive algorithms & Big-O (L06: Decision Trees)

Sebastian Raschka

6.3 Types of decision trees (L06: Decision Trees)

Sebastian Raschka

6.5 Gini & Entropy versus misclassification error (L06: Decision Trees)

Sebastian Raschka

6.6 Improvements & dealing with overfitting (L06: Decision Trees)

Sebastian Raschka

6.7 Code Example Implementing Decision Trees in Scikit-Learn (L06: Decision Trees)

Sebastian Raschka

7.1 Intro to ensemble methods (L07: Ensemble Methods)

Sebastian Raschka

7.2 Majority Voting (L07: Ensemble Methods)

Sebastian Raschka

7.3 Bagging (L07: Ensemble Methods)

Sebastian Raschka

7.4 Boosting and AdaBoost (L07: Ensemble Methods)

Sebastian Raschka

7.5 Gradient Boosting (L07: Ensemble Methods)

Sebastian Raschka

7.6 Random Forests (L07: Ensemble Methods)

Sebastian Raschka

7.7 Stacking (L07: Ensemble Methods)

Sebastian Raschka

8.1 Intro to overfitting and underfitting (L08: Model Evaluation Part 1)

Sebastian Raschka

8.2 Intuition behind bias and variance (L08: Model Evaluation Part 1)

Sebastian Raschka

8.3 Bias-Variance Decomposition of the Squared Error (L08: Model Evaluation Part 1)

Sebastian Raschka

8.4 Bias and Variance vs Overfitting and Underfitting (L08: Model Evaluation Part 1)

Sebastian Raschka

Related AI Lessons

⚡

⚡

⚡

⚡

I Tried 10 ChatGPT Resume Prompts. Here's What Actually Got Me Interviews.

Dev.to AI

How does indirect prompt injection work? #tech

Dev.to AI

A Unified View of AI Evolution: From Machine Learning to LLMs, RAG, and Fine-Tuning

Dev.to · Naimul Karim

OpenAI Just Unleashed GPT-5.5 — And It Signals the Next Phase of AI

Medium · AI

🎓

Tutor Explanation

DeepCamp AI

DeepCamp AI