Building an AI Judge: The Most Powerful (and Dangerous) Way to Evaluate LLMs

How do you test whether an LLM's output is actually "friendly"? You can't just write assert email.is_friendly() in pytest. This is the evaluation crisis every AI engineer faces: for open-ended, human-centric tasks, classic metrics that compare output against a reference answer fall short.

In this deep dive, we build an AI Judge (an LLM that evaluates the outputs of other LLMs) and show you how to make it trustworthy.

You'll learn:

✅ The 3 components of a production-ready judge prompt (Task, Criteria, Scoring).

✅ How to write model-agnostic Python code using OpenRouter.

✅ How to detect and mitigate the 3 critical biases (Position, Verbosity, Self-Preference), starting with a simple swap test.

✅ A 4-step safety checklist for using AI Judges in production.

✅ When NOT to use AI Judges (and what to do instead).
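The three prompt components above (Task, Criteria, Scoring) can be sketched as a small Python template. This is a minimal sketch, not the prompt from the demo repo: the function name build_judge_prompt, the friendliness criteria, and the 1 to 5 scale are illustrative assumptions.

```python
from typing import Sequence

# Hypothetical rubric for the "is this email friendly?" example; replace with your own.
DEFAULT_CRITERIA = (
    "Warm, polite greeting and sign-off",
    "Positive, respectful tone throughout",
    "No passive-aggressive or dismissive phrasing",
)


def build_judge_prompt(email: str, criteria: Sequence[str] = DEFAULT_CRITERIA) -> str:
    """Assemble a judge prompt from three parts: Task, Criteria, Scoring."""
    criteria_lines = "\n".join(f"- {c}" for c in criteria)
    return (
        # 1. Task: state exactly what the judge is evaluating.
        "TASK: You are evaluating whether an email is friendly.\n\n"
        # 2. Criteria: an explicit rubric, so the judge does not invent its own.
        f"CRITERIA:\n{criteria_lines}\n\n"
        # 3. Scoring: a fixed scale plus a machine-parseable output format.
        "SCORING: Rate the email from 1 (hostile) to 5 (very friendly). "
        "Reply with the score on the first line, then one sentence of justification.\n\n"
        f"EMAIL:\n{email}"
    )


print(build_judge_prompt("Hi Sam, thanks so much for the quick turnaround!"))
```

Keeping the scoring format strict (score alone on the first line) makes the judge's reply easy to parse and compare across runs.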

We also cover crucial settings like temperature=0, which makes your judge's scores far more consistent from run to run (though not perfectly deterministic).
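A model-agnostic judge call through OpenRouter's OpenAI-compatible chat completions endpoint might look like the sketch below. It uses only the Python standard library; the helper names and the OPENROUTER_API_KEY environment variable are assumptions, and the demo repo may use a different client.

```python
import json
import os
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_request_body(prompt: str, model: str) -> dict:
    """Chat-completions payload for the judge."""
    return {
        "model": model,  # any OpenRouter model ID; switching judges is a one-string change
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0,  # reduces, but does not fully eliminate, run-to-run variance
    }


def call_judge(prompt: str, model: str) -> str:
    """Send the prompt to OpenRouter and return the judge's text reply."""
    req = urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(build_request_body(prompt, model)).encode("utf-8"),
        headers={
            # Assumption: the API key lives in the OPENROUTER_API_KEY environment variable.
            "Authorization": f"Bearer {os.environ['OPENROUTER_API_KEY']}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=60) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]
```

To use a Grok judge, pass a Grok model ID copied from OpenRouter's model list as model; none is hard-coded here because available IDs change over time.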

🔗 Full Demo on GitHub: https://github.com/LLM-Implementation/Practical-LLM-Implementation/tree/main/AI-Engineering/demo/ai_judge

🧪 Models Used: xAI's Grok via OpenRouter (the code works with any of OpenRouter's 200+ models).

Chapter Timestamps

00:00 - The Test You Can't Write: The Evaluation Crisis

00:32 - The Most Powerful (and Dangerous) Tool

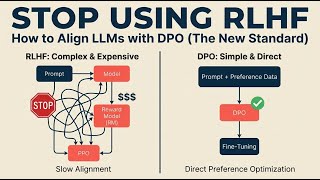

01:00 - The 3 Biases of AI Judges

01:29 - The 3 Pillars of a Perfect Judge Prompt

02:20 - DEMO: Building Our Judge with OpenRouter

04:10 - DEMO: The Moment of Truth - Testing for Bias

05:08 - The 4 Rules for Using AI Judges Safely
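The swap test for position bias can be sketched as follows: run the same pairwise comparison twice with the answer order reversed, and only trust a verdict that survives the swap. This is a minimal sketch; swap_test and the PairwiseJudge signature are illustrative assumptions, and in practice judge would wrap a real LLM call that returns "A" or "B".

```python
from typing import Callable, Optional

# A pairwise judge takes (question, answer_a, answer_b) and returns "A" or "B".
PairwiseJudge = Callable[[str, str, str], str]


def swap_test(judge: PairwiseJudge, question: str, answer_1: str, answer_2: str) -> dict:
    """Ask the judge twice with the answers in swapped positions and compare verdicts."""
    first = judge(question, answer_1, answer_2)   # answer_1 shown in slot A
    second = judge(question, answer_2, answer_1)  # answer_1 shown in slot B
    for verdict in (first, second):
        if verdict not in ("A", "B"):
            raise ValueError(f"Unexpected verdict: {verdict!r}")

    # Map each slot-based verdict back to the underlying answer.
    winner_first = "answer_1" if first == "A" else "answer_2"
    winner_second = "answer_2" if second == "A" else "answer_1"
    consistent = winner_first == winner_second
    winner: Optional[str] = winner_first if consistent else None
    # If the verdict flips with the order, report no winner instead of trusting either run.
    return {"consistent": consistent, "winner": winner}


# Usage with a stand-in judge (no API calls): it always prefers whatever is in slot A.
always_a = lambda q, a, b: "A"
print(swap_test(always_a, "Reply to the customer", "Thanks so much!", "Fine."))
# → {'consistent': False, 'winner': None}
```

A judge whose verdict flips when only the order changes is showing position bias on that pair; tracking the inconsistency rate across many pairs gives a rough measure of how much to trust it.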

💬 What's your biggest AI testing challenge right now? Drop a comment!

🔔 **Subscribe for practical AI insights** - we're breaking down how modern AI actually works, one video at a time.

This presentation is inspired by the core concepts in the book "AI Engineering" by Chip Huyen. If you want a deeper dive into these topics, I highly recommend checking it out.

🎓 Join our FREE AI Engineering Community on Discord: https://discord.gg/KpnJQbgpjt

#AIEvaluation #PythonTutorial #LLMTesting
