ALiBi - Train Short, Test Long: Attention with linear biases enables input length extrapolation

#alibi #transformers #attention

Transformers are essentially set models that need additional inputs to make sense of sequence data. The most widespread such inputs are position encodings or position embeddings, which add sequence index information in various forms. However, this limits the resulting model: it cannot reliably run inference on sequences longer than those it was trained on, because it would encounter unfamiliar position encodings. ALiBi addresses this by using simple, fixed linear biases on the attention scores as position information. This adds negligible overhead in time and memory, yet, surprisingly, the resulting model can handle inference on sequences many times longer than its training sequences.

OUTLINE:

0:00 - Intro & Overview

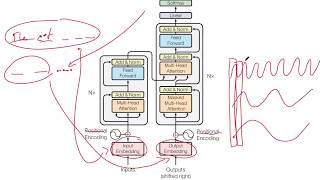

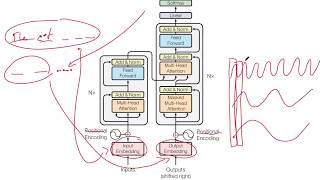

1:40 - Position Encodings in Transformers

4:55 - Sinusoidal Position Encodings

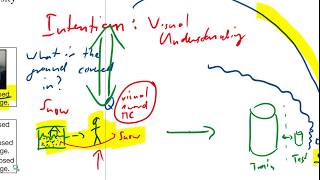

11:50 - ALiBi Position Encodings

20:50 - How to choose the slope parameter

23:55 - Experimental Results

29:10 - Comments & Conclusion

Paper: https://ofir.io/train_short_test_long.pdf

Code: https://github.com/ofirpress/attention_with_linear_biases

Abstract:

Since the introduction of the transformer model by Vaswani et al. (2017), a fundamental question remains open: how to achieve extrapolation at inference time to longer sequences than seen during training? We first show that extrapolation can be improved by changing the position representation method, though we find that existing proposals do not allow efficient extrapolation. We introduce a simple and efficient method, Attention with Linear Biases (ALiBi), that allows for extrapolation. ALiBi does not add positional embeddings to the word embeddings; instead, it biases the query-key attention scores with a term that is proportional to their distance. We show that this method allows training a 1.3 billion parameter model on input sequences of length 1024 that extrapolates to input sequences of length 2048, achieving the same perplexity as a sinusoidal position embedding model trained on inputs of length
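The biasing step described in the abstract can be illustrated with a small sketch. This is not the code from the linked repository: the function names (`alibi_slopes`, `alibi_bias`, `biased_attention_weights`) are hypothetical, it assumes a causal language model, and it assumes the number of heads is a power of two so that the per-head slopes form the geometric sequence 2^(-8/n), 2^(-16/n), and so on. It uses only the Python standard library so it runs as-is.

```python
import math


def alibi_slopes(num_heads):
    """Per-head slopes as a geometric sequence: 2^(-8/n), 2^(-16/n), ...

    Assumption: num_heads is a power of two.
    """
    ratio = 2.0 ** (-8.0 / num_heads)
    return [ratio ** (h + 1) for h in range(num_heads)]


def alibi_bias(seq_len, slope):
    """Causal bias matrix for one head.

    Entry (i, j) is -slope * (i - j) for keys j <= i (a penalty that grows
    linearly with distance) and -inf for future keys j > i (causal mask).
    """
    return [
        [-slope * (i - j) if j <= i else -math.inf for j in range(seq_len)]
        for i in range(seq_len)
    ]


def softmax(row):
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def biased_attention_weights(scores, slope):
    """Add the ALiBi bias to raw query-key scores, then apply softmax per row."""
    n = len(scores)
    bias = alibi_bias(n, slope)
    return [
        softmax([s + b for s, b in zip(scores[i], bias[i])])
        for i in range(n)
    ]


# With identical raw scores, the bias alone makes nearer keys weigh more.
raw_scores = [[0.0] * 4 for _ in range(4)]
weights = biased_attention_weights(raw_scores, slope=alibi_slopes(8)[0])
```

Because the bias depends only on the distance `i - j` and has no learned parameters, `alibi_bias` can be called with any `seq_len`, including lengths never seen during training. No position embedding is added to the word embeddings in this sketch, matching the description in the abstract.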
