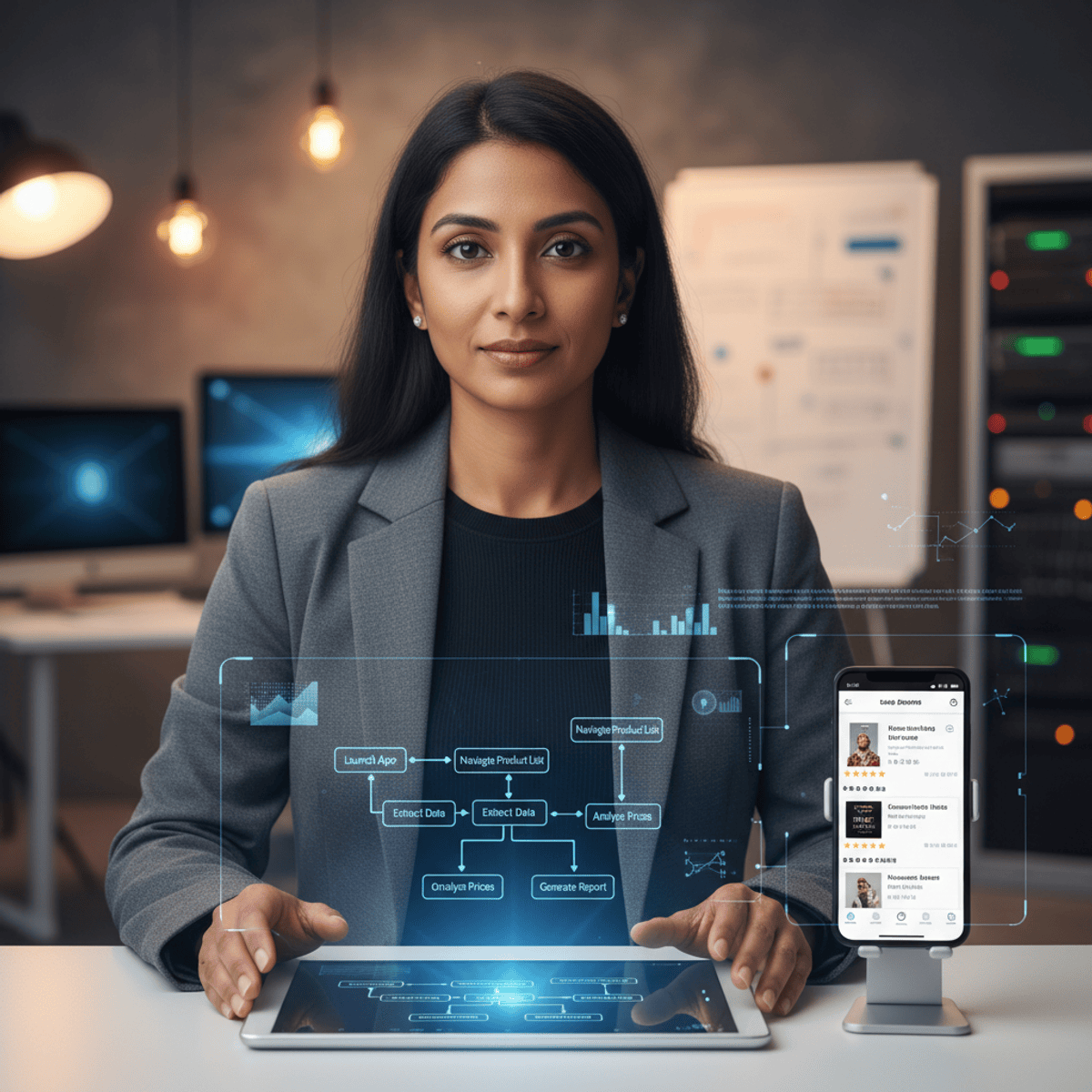

AI-Powered Data Pipelines with Deno

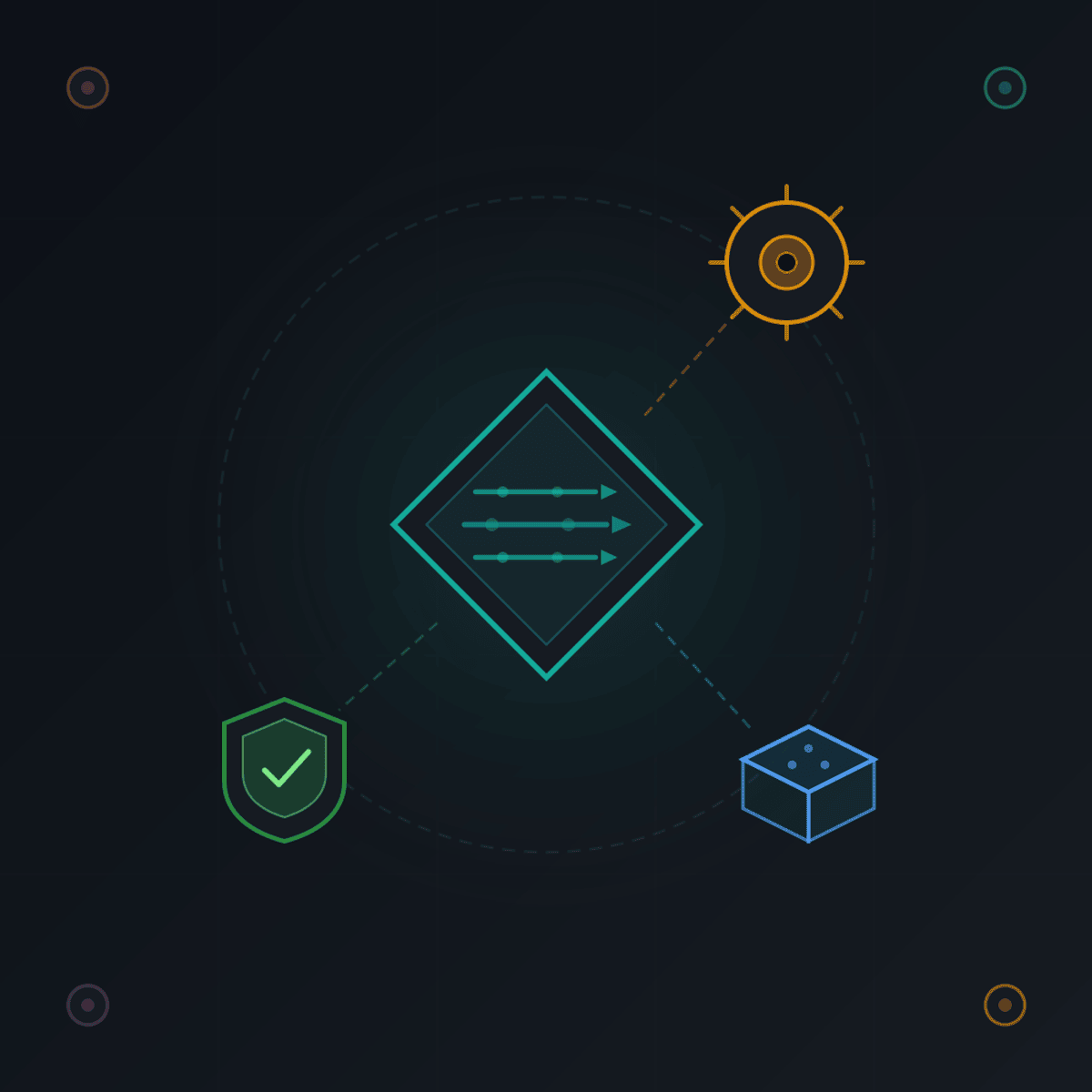

Learn to build AI-powered data pipelines with Deno, a modern JavaScript and TypeScript runtime with built-in security and developer tooling. You will explore roadmap-driven development, using agentic AI to automate project planning, and implement git pre-commit hooks and quality gates that enforce code standards before commits enter the repository.

The course covers the Deno ecosystem, including its module system with URL-based imports, its standard library, and the distinction between proactive and reactive toolchains, illustrated by comparing Deno with Ruchy. You will build data engineering workflows with the Deno task system, configuring tasks in deno.json so that data processing pipelines are repeatable. The task playbooks module shows how to compose multiple tasks into end-to-end data pipelines and run them in hands-on demonstrations.

The production tooling module covers `deno compile` for building standalone executables that run without a separate Deno installation, `deno doc` for generating API documentation directly from TypeScript types, and `deno vendor` for caching remote dependencies locally so that builds are reproducible offline. By the end of the course, you will be able to design, build, and deploy AI-powered data pipelines with Deno's built-in task system, compile them to standalone binaries, and vendor their dependencies for production reliability.
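
The description does not include code, so the following is only a minimal sketch, assuming a toy CSV of sales records, of the pattern the task playbooks module describes: small steps composed into one end-to-end pipeline. A validation step rejects malformed rows before aggregation. The record shape and the names `pipe`, `parseCsv`, `validate`, `totalsByRegion`, and `salesPipeline` are hypothetical, not taken from the course. The JSDoc comments on the exports are the kind of documentation `deno doc` renders alongside their TypeScript types.

```typescript
// pipeline.ts (hypothetical file name): a minimal sketch of composing
// data-processing steps into one pipeline. The CSV schema and every
// function name here are illustrative assumptions, not the course's code.

/** One row of input data: a sales amount for a region (hypothetical schema). */
export interface SaleRecord {
  region: string;
  amount: number;
}

/** A pipeline step: a pure function from one stage's output to the next. */
export type Step<I, O> = (input: I) => O;

/** Chain two steps so the output of `first` becomes the input of `second`. */
export function pipe<A, B, C>(first: Step<A, B>, second: Step<B, C>): Step<A, C> {
  return (input: A) => second(first(input));
}

/** Parse CSV text with a header row `region,amount` into records. */
export function parseCsv(text: string): SaleRecord[] {
  const lines = text.trim().split(/\r?\n/);
  // Skip the header row; a blank or non-numeric amount becomes NaN.
  return lines.slice(1).map((line) => {
    const [region = "", amount = ""] = line.split(",");
    return {
      region: region.trim(),
      amount: amount.trim() === "" ? NaN : Number(amount),
    };
  });
}

/** Drop rows whose amount is not a finite, non-negative number. */
export function validate(records: SaleRecord[]): SaleRecord[] {
  return records.filter((r) => Number.isFinite(r.amount) && r.amount >= 0);
}

/** Sum the amounts for each region. */
export function totalsByRegion(records: SaleRecord[]): Map<string, number> {
  const totals = new Map<string, number>();
  for (const r of records) {
    totals.set(r.region, (totals.get(r.region) ?? 0) + r.amount);
  }
  return totals;
}

/** The composed pipeline: parse, then validate, then aggregate. */
export const salesPipeline = pipe(pipe(parseCsv, validate), totalsByRegion);

// Example run on inline sample data: "oops" and -5 are rejected by validate.
const sampleCsv = `region,amount
north,120
south,80
north,30
south,oops
east,-5`;

console.log(Object.fromEntries(salesPipeline(sampleCsv)));
```

Because this sketch reads no files, it needs no permission flags, so it could be registered in deno.json as `"tasks": { "pipeline": "deno run pipeline.ts" }` and run with `deno task pipeline`; a version that read its input from disk would need `--allow-read`. The same file could be packaged with `deno compile pipeline.ts` into a standalone executable, and `deno doc pipeline.ts` prints the documentation for its exports. Keeping each step a pure function makes every stage testable on its own before it is chained into a larger playbook.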
